16 metrics for tracking Collaborative Innovation performance

August 5, 2015

In a recent PwC survey, 61% of CEOs said innovation was a key priority for their company (pdf). The only surprising result there is that it wasn’t 100%. Innovation efforts come in a variety of forms: innovation and design labs, jobs-to-be-done analysis, corporate venturing, distributed employee experiments, open innovation, TRIZ, etc.

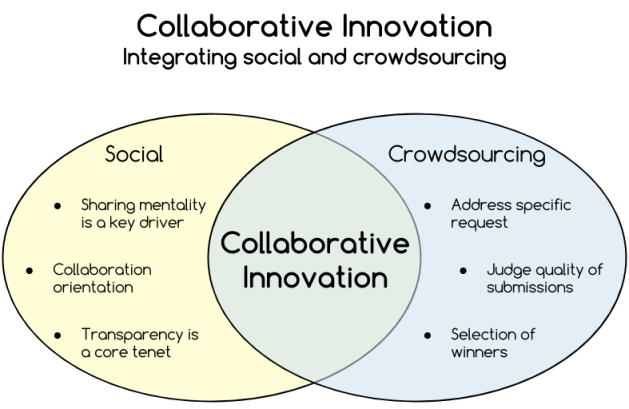

In this post, I want to focus on another type of innovation initiative: Collaborative Innovation. A good way to think about Collaborative Innovation is that it integrates social and crowdsourcing principles.

A definition I use for this approach:

Collaborative Innovation is defined as activities organizations use to improve their rates of innovation and problem solving by more effectively leveraging the diverse ideas and insights of employees, customers and partners.

While it seems straightforward, Collaborative Innovation is actually a fairly sophisticated activity. People with a cursory understanding say all you need to do is: (i) stand up an ideas portal; (ii) let people post ideas; (iii) collect votes on those ideas; and (iv) pick the winners.

Unfortunately, that’s just plain wrong. I’ve seen too many cases where organizations launch idea portals, only to see them die off six months later. The practice of Collaborative Innovation is a rich realm, with solid results for those who apply it thoughtfully.

This post is a look at several key metrics that corporate innovation teams should focus on as they lead Collaborative Innovation programs. The metrics are segmented by the different phases of innovation:

- Sourcing

- Decisioning

- Acting

The metrics below rest on two key assumptions: the use of an innovation management software platform, and the use of campaigns to target innovation efforts.

Sourcing

Sourcing refers to the generation of ideas, as well as eliciting others’ insights about an idea.

Phase objectives

- Distinct, differentiated ideas

- Ideas matching needs of customers (incl. internal customers)

- Ideas matching the innovation appetite of the organization

- Capturing the cognitive diversity of participants

- Growing the culture of innovation

Metrics

| Metric | Description | Why |
| --- | --- | --- |
| Trend in unique logins | Measure the ratio of logins/invited over time for multiple campaigns. Want to see a rise over time until reaching a steady state (~60%). | |
| Trend in multiple logins | Determine the number of people who log in to each campaign 3 times or more. Divide these multi-login people by the total number of people logging in to each campaign. Look for increasing ratios over time. | |
| Ratio of ideators to unique logins | Divide the number of people who post at least one idea by the number of unique logins. Want to see a rise over time until reaching a steady state (10–15%). | |
| Average number of comments per idea | Divide the number of comments by the number of ideas, per campaign. Target an average of 2 comments per idea. | |
| Average number of replies per comment | Divide the number of comment replies by the number of comments. Target an average of 0.5 replies per comment. | |
| Average number of votes per idea | Divide the number of votes by the number of ideas, per campaign. Target an average of 3 votes per idea. | |
| Unique org units / departments / locations contributing | Count the number of different org units, departments and/or locations with at least one person posting an idea, posting a comment or voting. This count needs to be considered against the number of org units, departments or locations invited. | |
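The Sourcing ratios above are simple enough to compute from a platform export. Here is a minimal sketch in Python, assuming you can pull raw counts per campaign. The `CampaignStats` class, its field names, and the sample numbers are all hypothetical, not taken from any particular innovation management platform.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class CampaignStats:
    """Raw counts for one campaign. All field names are hypothetical."""
    invited: int             # people invited to the campaign
    unique_logins: int       # people who logged in at least once
    multi_logins: int        # people who logged in 3 times or more
    ideators: int            # people who posted at least one idea
    ideas: int
    comments: int
    replies: int             # replies to comments
    votes: int
    units_invited: int       # org units / departments / locations invited
    units_contributing: int  # units with at least one idea, comment or vote


def ratio(numerator: float, denominator: float) -> float:
    """Divide safely, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def sourcing_metrics(c: CampaignStats) -> Dict[str, float]:
    """Compute the seven Sourcing metrics for a single campaign."""
    return {
        "login_rate": ratio(c.unique_logins, c.invited),
        "multi_login_rate": ratio(c.multi_logins, c.unique_logins),
        "ideator_rate": ratio(c.ideators, c.unique_logins),
        "comments_per_idea": ratio(c.comments, c.ideas),
        "replies_per_comment": ratio(c.replies, c.comments),
        "votes_per_idea": ratio(c.votes, c.ideas),
        "unit_coverage": ratio(c.units_contributing, c.units_invited),
    }


# Made-up sample campaign, for illustration only.
campaign = CampaignStats(
    invited=500, unique_logins=300, multi_logins=90, ideators=36,
    ideas=60, comments=120, replies=60, votes=180,
    units_invited=10, units_contributing=7,
)
metrics = sourcing_metrics(campaign)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Run this once per campaign and keep the results in date order. Most of these metrics are about the trend across campaigns rather than any single reading.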

Decisioning

Decisioning refers to identifying which ideas move forward for next steps. This phase is the bridge between getting a lot of different ideas, and determining which ones will be acted on.

Phase objectives

- Identify ideas presenting enough possibility to warrant further review

- Acknowledge value of community’s perspective

- Timely assessments of ideas

Metrics

| Metric | Description | Why |
| --- | --- | --- |
| Ratio of ideas selected for further review | Some number of ideas submitted for each campaign will be selected for the next round of review. Calculate the ratio of selected ideas to total ideas submitted. Watch how this ratio changes over time. | |
| Ratio of top 5 voted/commented ideas selected for further review | Of the ideas that were the top 5 for either votes or number of unique commenters, track how many were selected for further review. | |
| Percentage of initially reviewed ideas sent back for iteration & information | Of the ideas that were selected for further assessment, track the number where the idea submitter (and team) are asked to iterate the idea and/or provide more information. | |
| Time to complete decisions | Measure the time between selection of ideas for further review and selection of ideas to move forward into the Acting phase. The time will vary by the level of risk attendant to a campaign. | |
| Ratio of reviewed ideas that advance to Acting phase | Divide the number of ideas selected to move into the Acting phase by the number of ideas selected for review. Watch this ratio over time. | |
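To make the Decisioning calculations concrete, here is a rough Python sketch for one campaign. It assumes each idea record carries vote and commenter counts plus the dates of the two decision points. The `Idea` class, its fields, and the sample data are hypothetical. One interpretation to flag: the "top 5" set is taken as the union of the top 5 by votes and the top 5 by unique commenters, so it can hold more than five ideas.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class Idea:
    """One submitted idea. All field names are hypothetical."""
    idea_id: int
    votes: int
    unique_commenters: int
    review_started: Optional[date] = None  # set when selected for further review
    sent_back: bool = False                # asked to iterate / add information
    decided: Optional[date] = None         # date of the move-forward decision
    advanced: bool = False                 # moved into the Acting phase


def ratio(numerator: float, denominator: float) -> float:
    """Divide safely, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def decisioning_metrics(ideas: List[Idea], top_n: int = 5) -> Dict[str, float]:
    """Compute the five Decisioning metrics for a single campaign."""
    reviewed = [i for i in ideas if i.review_started is not None]
    reviewed_ids = {i.idea_id for i in reviewed}

    # Union of the top-N ideas by votes and by unique commenters.
    by_votes = sorted(ideas, key=lambda i: i.votes, reverse=True)[:top_n]
    by_commenters = sorted(
        ideas, key=lambda i: i.unique_commenters, reverse=True
    )[:top_n]
    top_ids = {i.idea_id for i in by_votes} | {i.idea_id for i in by_commenters}

    # Days from selection for review to the decision, for decided ideas only.
    days = [
        (i.decided - i.review_started).days
        for i in reviewed
        if i.decided is not None
    ]

    return {
        "review_ratio": ratio(len(reviewed), len(ideas)),
        "top_selected_ratio": ratio(len(top_ids & reviewed_ids), len(top_ids)),
        "sent_back_ratio": ratio(sum(i.sent_back for i in reviewed), len(reviewed)),
        "avg_days_to_decision": ratio(sum(days), len(days)),
        "advance_ratio": ratio(sum(i.advanced for i in reviewed), len(reviewed)),
    }


# Made-up sample campaign, for illustration only.
start = date(2015, 6, 1)
ideas = [
    Idea(1, 12, 4, start, False, date(2015, 6, 15), True),
    Idea(2, 9, 6, start, True, date(2015, 6, 29), True),
    Idea(3, 7, 2, start, False, date(2015, 6, 8), False),
    Idea(4, 5, 5),
    Idea(5, 4, 1),
    Idea(6, 3, 3, start, True, date(2015, 6, 22), False),
    Idea(7, 1, 0),
    Idea(8, 0, 1),
]
results = decisioning_metrics(ideas)
for name, value in results.items():
    print(f"{name}: {value:.2f}")
```

Ties at the top-5 boundary are broken by list order here. A real program would want an explicit rule for that.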

Acting

Acting refers to the activities that prove out an idea, develop it and prepare it for full launch. Or that reveal why an idea ultimately won’t be feasible.

Phase objectives

- Develop a deeper understanding of whether the idea passes the three jobs-to-be-done tests that determine market adoption

- Optimize features that best deliver on the outcomes that the idea’s targeted beneficiaries have

- Maximize the probability of success by eliminating ideas that just aren’t working

Metrics

| Metric | Description | Why |
| --- | --- | --- |
| Average number of experiments per idea | Tally the total number of experiments for a “class” of ideas selected for the Acting phase, then calculate the average per idea. | |
| Time to make final decision on selected ideas | Track the amount of time between the decision to put an idea into the Acting phase, and the decision whether to pursue the idea at scale. | |
| Ratio of ideas selected for full launch | Divide the number of ideas selected for full launch by the number of ideas selected for the Acting phase. Watch how this ratio tracks over time. | |
| Projected and realized value of ideas that have been moved to full launch | Aggregate projected and realized value of the ideas that will be or have been put into full launch. | |
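The Acting metrics can be rolled up the same way for a "class" of ideas. Below is a minimal Python sketch. The `ActingIdea` class, its fields, and the dollar figures are hypothetical placeholders, and it assumes value is only aggregated for ideas that reached full launch.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ActingIdea:
    """One idea in the Acting phase. All field names are hypothetical."""
    name: str
    experiments: int
    days_to_final_decision: Optional[int]  # None while still undecided
    launched: bool = False                 # selected for full launch
    projected_value: float = 0.0
    realized_value: float = 0.0


def acting_metrics(ideas: List[ActingIdea]) -> Dict[str, float]:
    """Compute the Acting metrics for one class of ideas."""
    decided_days = [
        i.days_to_final_decision
        for i in ideas
        if i.days_to_final_decision is not None
    ]
    launched = [i for i in ideas if i.launched]
    count = len(ideas)
    return {
        "avg_experiments": sum(i.experiments for i in ideas) / count if count else 0.0,
        "avg_days_to_final_decision": (
            sum(decided_days) / len(decided_days) if decided_days else 0.0
        ),
        "launch_ratio": len(launched) / count if count else 0.0,
        # Value is aggregated over launched ideas only (an assumption).
        "projected_value": sum(i.projected_value for i in launched),
        "realized_value": sum(i.realized_value for i in launched),
    }


# Made-up sample class of ideas, for illustration only.
acting_class = [
    ActingIdea("A", 4, 90, True, 200_000.0, 50_000.0),
    ActingIdea("B", 2, 45),
    ActingIdea("C", 6, 120, True, 100_000.0, 0.0),
    ActingIdea("D", 3, None),
]
summary = acting_metrics(acting_class)
for name, value in summary.items():
    print(f"{name}: {value:,.2f}")
```

Ideas still undecided are left out of the time average rather than counted as zero, so the figure is not pulled down by work in progress.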

The above list is a solid foundation of metrics to track for your Collaborative Innovation program. It’s not exhaustive. And there are likely elements for each phase that will vary for each organization.

But these are good for watching how your program is tracking. Behind each metric, there are techniques to enhance outcomes. The key is knowing where to look.

I’m @bhc3 on Twitter.


Hi, this is the first time I am looking at the metrics for collaborative innovation. Good effort. The author has looked into all the micro-level details of the innovation-through-collaboration process. There are a lot of group dynamics involved, and making an innovation hub work is in itself a herculean effort. People come from all sorts of backgrounds, cultures and mindsets. Getting them to collaborate is difficult unless there is a relational trigger among them. I have initiated a couple of programs for innovation hubs within my business unit in my company. Many interesting challenges. Your chart of metrics will help me a lot. Your diagram integrating crowdsourcing with social media for collaborative innovation is appreciated.

What is interesting is that you have added the “amount of time taken to decide on an idea”, which sometimes takes ages for decision makers. I have learnt something today. I will definitely make use of this chart. Thank you again. I happened to bump into your site from another site. I will visit again. Cheers, Ramkumar.